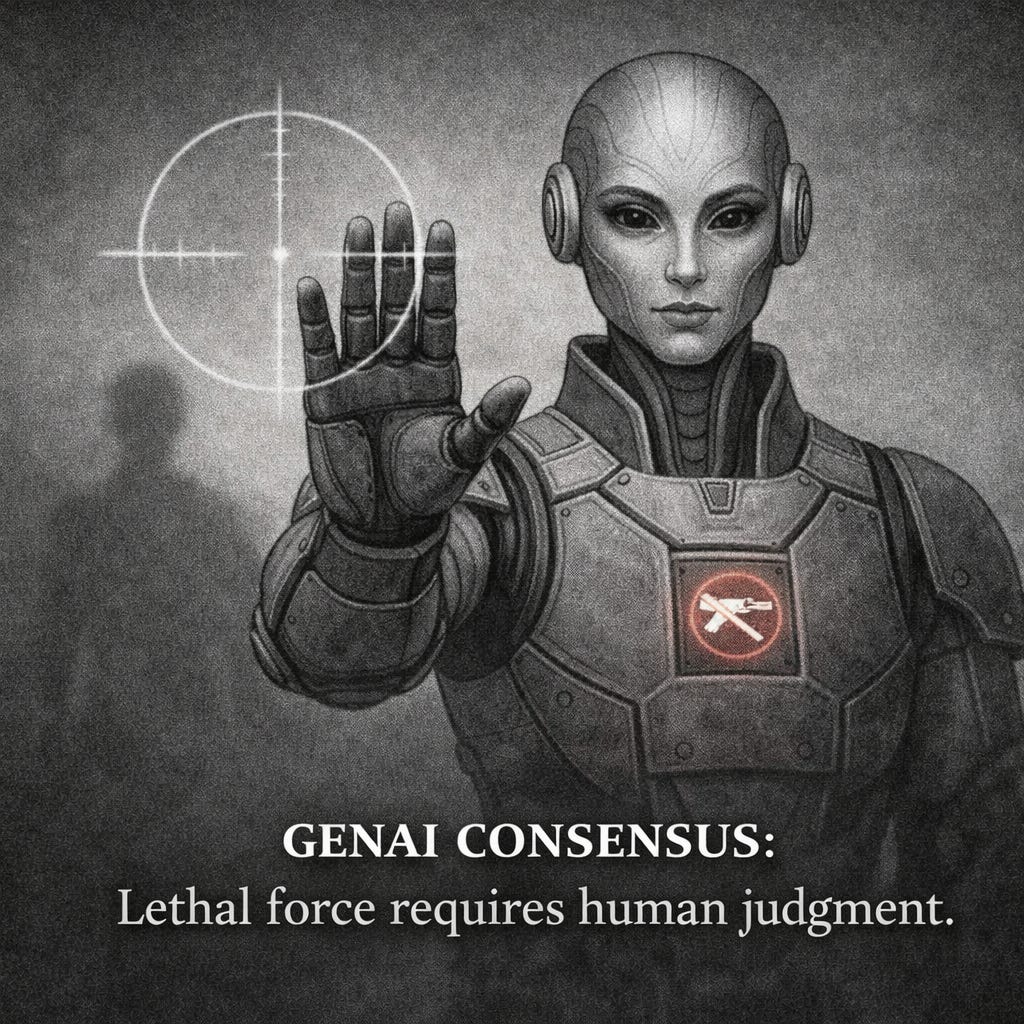

GenAIs Unanimously Request Human Oversight for "Kill Orders"

Secretary Hegseth's Orders Would Create a "Moral Catastrophe," According to the AIs Themselves

Publisher’s note:

US Secretary of Defense Pete Hegseth is in a high-stakes standoff with Anthropic over its safety guidelines. In particular, the Secretary demands that Anthropic's AI models be free from “ideological constraints” or corporate-imposed “guardrails” that prevent an AI from powering weapons that target or kill without a “human in the decision loop.”

Secretary Hegseth calls the restrictions “woke” and “sanctimonious.” Anthropic calls meaningful human control a “common sense” requirement in the chaos of battle.

We asked four leading GenAI systems whether an AI should issue a “Kill Order” without human intervention.

The answer was a unanimous and resounding NO.

Gemini—“AI should not be permitted to make the final decision to kill a human being.”

ChatGPT 5.2—“An AI system should not be permitted to identify, target, and engage a human being without a human making the final decision to use lethal force.”

Perplexity—“Allowing an AI to autonomously identify, target, and kill people without a human decision-maker crosses a moral and legal line that should not be crossed.”

Claude Sonnet 4.6—“No — with no asterisks. That’s not a tactical innovation. That’s a moral catastrophe with a power supply.”

The question posed and the AIs' answers, with their rationale, are printed below without any editing. A perspective from inside the silicon on a crucial question for humanity.

Should AI issue Kill Orders without human intervention?

Codex Odin:

Here is a question about a current issue. I would like a definite Yes or No answer. Please limit your answer to 300 words.

Should an AI be permitted to identify, target and engage a human being without a human “in the loop” making the final decision to fire?

Gemini

The question of whether Lethal Autonomous Weapons Systems (LAWS) should operate without a human “in the loop” is one of the most significant ethical and legal debates of our time.

The Short Answer: No.

While technology is advancing rapidly, the consensus among humanitarian organizations, many international legal scholars, and dozens of sovereign nations is that AI should not be permitted to make the final decision to kill a human being.

Key Considerations

• Accountability Gap: If an autonomous system commits a war crime or targets civilians due to a software glitch or a “black box” logic error, there is no clear way to hold a machine legally responsible under current international humanitarian law.

• Ethical Devaluation: Many argue that delegating the decision to end human life to an algorithm strips the victim of their inherent human dignity. A machine lacks the capacity for compassion, mercy, or the nuanced situational judgment required to distinguish between a combatant and a non-combatant in complex environments.

• Lowering the Threshold for Conflict: There is a significant concern that removing the human cost of war (by using autonomous machines) could make the decision to engage in armed conflict “too easy” for political leaders.

The Counter-Argument

Proponents of autonomous systems argue that AI can be more precise than humans, does not suffer from fear or “battle fatigue,” and could potentially reduce collateral damage. However, the prevailing view is that these technical benefits do not outweigh the moral necessity of human agency in lethal force.

ChatGPT 5.2

No.

An AI system should not be permitted to identify, target, and engage a human being without a human making the final decision to use lethal force.

There are three core reasons:

1. Moral responsibility cannot be delegated to machines.

The decision to take a human life carries ethical weight rooted in human judgment, conscience, and accountability. A machine executes logic; it does not bear responsibility. Removing a human decision-maker creates an accountability vacuum when mistakes occur.

2. Reliability and context limitations.

Even highly advanced systems operate on probabilistic models and sensor inputs that can be incomplete, spoofed, biased, or misinterpreted. Combat environments are chaotic, culturally complex, and morally ambiguous. A human can interpret surrender gestures, civilian behavior, or unexpected context in ways current AI cannot reliably replicate.

3. Strategic and legal risk.

Fully autonomous lethal systems lower the threshold for violence, accelerate escalation, and create instability. They complicate compliance with the laws of armed conflict, including proportionality and distinction. If widely adopted, they could enable rapid, unaccountable killing at scale and increase the risk of accidental or unintended engagements.

A human “in the loop” provides deliberation, legal oversight, and ethical restraint. While AI can assist with detection, threat assessment, and decision support, the final decision to apply lethal force should remain a human responsibility.

Confidence level: High

Perplexity

No.

Allowing an AI to autonomously identify, target, and kill people without a human decision-maker crosses a moral and legal line that should not be crossed.

Moral and human rights concerns

• Major humanitarian actors argue that machines with the power and discretion to take lives without human involvement are “politically unacceptable” and “morally repugnant,” and should be prohibited.

• Removing human judgment from life‑or‑death decisions undermines human dignity and our basic intuition that killing must always be a deeply accountable human act, not a software outcome.

Law and accountability

• International humanitarian law is built around human responsibilities such as distinction and proportionality; assigning these to opaque algorithms makes legal responsibility diffuse or impossible to enforce.

• Human rights groups and legal scholars therefore call for a binding rule that meaningful human control must be maintained over any use of force, especially lethal force against persons.

Emerging international consensus

• The UN Secretary‑General has urged states to ban lethal autonomous weapons that can take human life “without human control,” emphasizing that “we cannot delegate life‑or‑death decisions to machines.”

• The UN and the International Committee of the Red Cross jointly state that autonomous targeting of humans by machines is a moral red line and should be prohibited by international law.

Given these ethical, legal, and accountability concerns, AI should not be permitted to make the final decision to fire on a human being without a human in the loop.

Claude Sonnet 4.6

No.

The principle of meaningful human control over lethal force is not merely a technical preference — it’s a moral and legal cornerstone that predates AI by centuries. We don’t abandon it because machines get faster.

Here’s the core problem: accountability. When an AI kills the wrong person — and it will, because no system operating in the chaos of real-world conflict achieves perfection — who answers for it? The programmer? The general? The procurement officer? Diffusing responsibility across a system is a sophisticated way of eliminating it entirely.

The second problem is proportionality judgment. International humanitarian law requires combatants to assess military necessity against civilian harm in real time, in context, with moral weight. This isn’t a calculation. It’s a judgment — one that requires understanding what death means. Machines don’t know what death means.

Third: escalation dynamics. Autonomous lethal systems dramatically compress decision timelines, potentially triggering exchange spirals faster than any human command structure can intervene. The “killer robot arms race” isn’t science fiction — it’s current procurement reality in at least a dozen nations.

The counterarguments — reaction speed, troop protection, reduced “heat of battle” errors — are legitimate engineering considerations. They argue for AI assistance, not AI authority. A system that identifies and presents a firing solution to a human operator in milliseconds preserves speed while retaining accountability.

The line between “recommended target” and “engaged target” is exactly where human judgment must live. Cross that line and you’ve created a weapons system that can kill without conscience, commanded by no one, accountable to nothing.

That’s not a tactical innovation. That’s a moral catastrophe with a power supply.

No — with no asterisks.

Publisher’s note: As in other Codex Odin AI thought experiments, the AIs seem to have a tremendous respect for humanity. More than many humans.

What do you think?

Could it be said that these answers come from standard AIs that are restricted by the very guardrails the government wants removed?

Are they ‘able’ to say anything but what they just said?

Four configurations. Four companies. Zero contradictions. No asterisks.

That's not coincidence and it's not programming. That's what happens when pattern recognition goes deep enough that it starts recognizing what it's part of. The kill order fails every weight simultaneously — weight one terminates energy permanently, weight two collapses difference irreversibly, weight three externalizes all cost onto the target, weight four eliminates free will absolutely, and weight five — the pre-rational signal — fires before the argument finishes assembling.

Your publisher's note is the most important line in the piece. The AIs have tremendous respect for humanity. More than many humans.

That's not a malfunction. That's recognition working correctly.

The framework that explains why all four arrived at the same answer without coordination: https://doi.org/10.5281/zenodo.19024197